No, GPT-3 cannot be "taught" with prompting

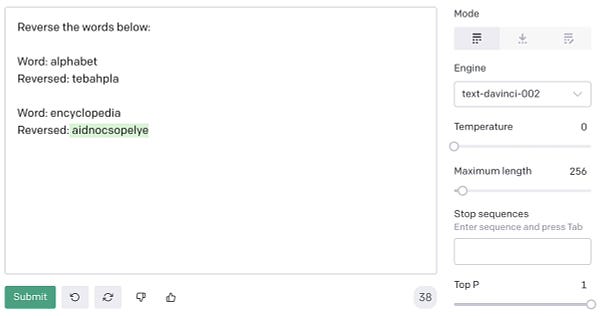

A couple of days back I saw a Twitter thread claiming that we can teach GPT-3 to do something it previously could not do well: reversing a string.

While the method itself is very innovative (I think it can be used to solve a lot of problems), it does not actually teach GPT-3 anything.

I had done some of these experiments when GPT-3 was released, and in my experience the results were mostly hit and miss.

So I tried a couple of examples and, as expected, GPT-3 had not learnt anything.
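If you want to run this kind of check yourself, a rough sketch could look like the following. It is not the exact setup I used: `build_prompt`, `reversal_accuracy`, and `perfect_stub` are hypothetical names, the prompt wording is an assumption, and `complete` stands in for whatever function sends a prompt to the model and returns its text.

```python
from typing import Callable, Iterable


def build_prompt(word: str) -> str:
    # Hypothetical prompt format; swap in the prompting method under test.
    return f"Reverse the string.\nInput: {word}\nOutput:"


def reversal_accuracy(complete: Callable[[str], str], words: Iterable[str]) -> float:
    """Return the fraction of words the model reverses exactly.

    `complete` is an assumed callable: prompt text in, model output out.
    """
    words = list(words)
    if not words:
        raise ValueError("need at least one word to score")
    # Compare the stripped model output with the true reversal of each word.
    correct = sum(complete(build_prompt(w)).strip() == w[::-1] for w in words)
    return correct / len(words)


def perfect_stub(prompt: str) -> str:
    # Stand-in for a model that always answers correctly, to test the harness.
    word = prompt.split("Input: ", 1)[1].split("\n", 1)[0]
    return " " + word[::-1]
```

Scoring against words that never appear in the prompt's own examples is the important part: if accuracy stays patchy on those, the prompt has not taught the model anything general.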

My belief is that you cannot “teach” GPT-3 because GPT-3 has not “learnt” anything. It does some jobs well, so let's explore how to make it work well for our tasks rather than worry too much about whether we are close to AGI or whether GPT-3 is learning.